The referential integrity check verifies that, for every sale, the customer who purchased the product, the product sold, and the time and location of the sale are all recognized.

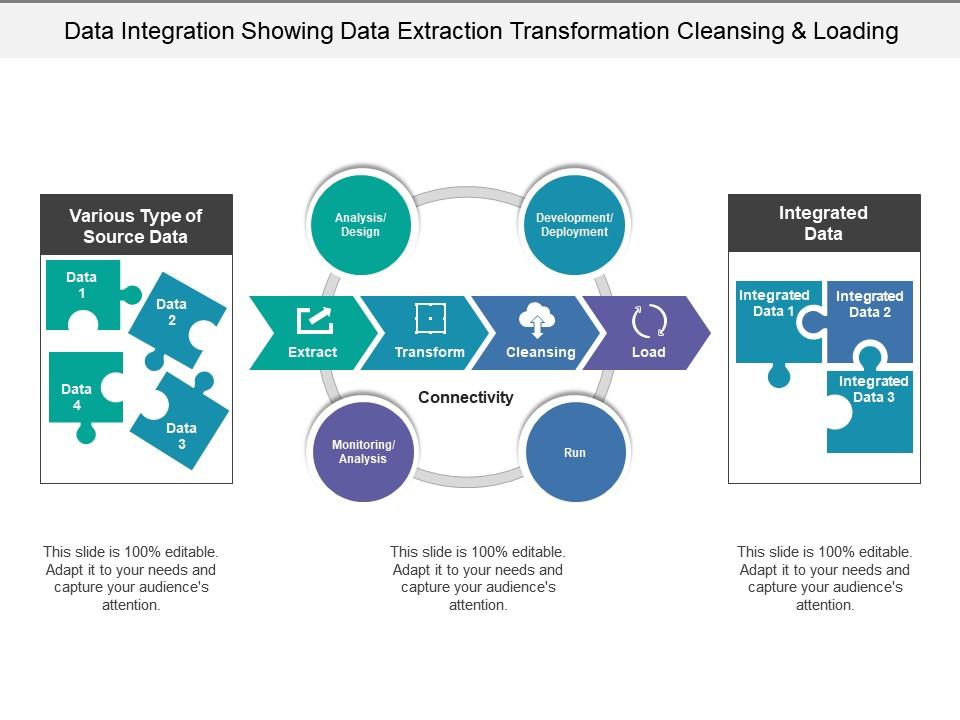
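A check of this kind can be sketched with a few left joins. The example below is only an illustration under stated assumptions: the table and column names (`fact_sales`, `dim_customer`, `dim_product`, `dim_time`, `dim_location` and their `*_id` keys) are hypothetical, and an in-memory SQLite database stands in for the data warehouse.

```python
import sqlite3


def find_orphan_sales(conn):
    """Return the ids of sales whose customer, product, time, or location
    key has no matching row in the corresponding dimension table."""
    query = """
        SELECT s.sale_id
        FROM fact_sales AS s
        LEFT JOIN dim_customer AS c ON s.customer_id = c.customer_id
        LEFT JOIN dim_product  AS p ON s.product_id  = p.product_id
        LEFT JOIN dim_time     AS t ON s.time_id     = t.time_id
        LEFT JOIN dim_location AS l ON s.location_id = l.location_id
        WHERE c.customer_id IS NULL
           OR p.product_id  IS NULL
           OR t.time_id     IS NULL
           OR l.location_id IS NULL
        ORDER BY s.sale_id
    """
    return [row[0] for row in conn.execute(query)]


def build_demo_db():
    """Create a tiny, hypothetical star schema with two broken sale rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE dim_customer (customer_id INTEGER PRIMARY KEY);
        CREATE TABLE dim_product  (product_id  INTEGER PRIMARY KEY);
        CREATE TABLE dim_time     (time_id     INTEGER PRIMARY KEY);
        CREATE TABLE dim_location (location_id INTEGER PRIMARY KEY);
        CREATE TABLE fact_sales (
            sale_id     INTEGER PRIMARY KEY,
            customer_id INTEGER,
            product_id  INTEGER,
            time_id     INTEGER,
            location_id INTEGER
        );
        INSERT INTO dim_customer VALUES (1), (2);
        INSERT INTO dim_product  VALUES (10);
        INSERT INTO dim_time     VALUES (100);
        INSERT INTO dim_location VALUES (1000);
        INSERT INTO fact_sales VALUES (1, 1, 10, 100, 1000);
        INSERT INTO fact_sales VALUES (2, 2, 10, 100, 1000);
        INSERT INTO fact_sales VALUES (3, 3, 10, 100, 1000);  -- unknown customer
        INSERT INTO fact_sales VALUES (4, 1, 99, 100, 1000);  -- unknown product
    """)
    return conn
```

Calling `find_orphan_sales(build_demo_db())` returns the ids of the two sales that point at a missing dimension row. An empty result would mean every sale is related to a record in each dimension table.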

The data extracted from the source systems is not in a usable format. Because the source systems are not similar in nature, the data has to undergo a few conversions. It is transformed into a format that adheres to the business rules.

Transformation deals with schema-level problems:

- Structural conflicts, where the objects have different representations or conversion types.
- Naming conflicts caused by homonyms.

The data staging area, owned by the ETL team, is the intermediate area in a data warehouse where the transformation activities take place:

- The source data snapshots are compared with the previous snapshots to identify newly inserted or updated data.
- The data then undergoes several filters and transformations.
- If an environmental failure occurs, the data can be reloaded from the staging tables without going back to the sources.
- Users are not allowed access to the staging area.
- Reports cannot be accessed in the staging area.
- Only the ETL processes can read or write the staging area.

The staging area consists of both RDBMS tables and data files:

- Flat files: fast to write, append to, sort, and filter.
- Relational tables: metadata, DBA support, SQL interface.
- Dimensional model: facts, dimensions, OLAP cubes.

After the data has been cleansed and converted into a structure that adheres to the data warehouse requirements, it is ready to be loaded into the data warehouse. The data loaded into the data warehouse is made presentable to the users.

Checks to be performed after loading data:

Referential integrity between the dimensions and facts is verified. This is done to ensure that all the records in the fact table are related to records in the dimension tables. For example, if a fact table of product sales is to be related to the dimension tables for products, time, and customers, then for each sale record there must be a record in each dimension table that relates to it through the primary keys.
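The snapshot comparison used in the staging area can be sketched as follows. This is a minimal illustration, assuming each snapshot is held in memory as a dictionary keyed by the record's primary key; the function name `diff_snapshots` and the sample rows are hypothetical, and a real staging area would more likely compare tables or files.

```python
def diff_snapshots(previous, current):
    """Compare two source snapshots keyed by primary key.

    Returns a pair (inserted, updated):
    - inserted: rows whose key appears only in the current snapshot
    - updated:  rows whose key appears in both snapshots with different content
    """
    inserted = {
        key: row for key, row in current.items() if key not in previous
    }
    updated = {
        key: row
        for key, row in current.items()
        if key in previous and previous[key] != row
    }
    return inserted, updated


# Hypothetical snapshots of a customer source table.
previous_snapshot = {
    1: {"name": "Alice", "city": "Oslo"},
    2: {"name": "Bob", "city": "Paris"},
}
current_snapshot = {
    1: {"name": "Alice", "city": "Oslo"},     # unchanged
    2: {"name": "Bob", "city": "Berlin"},     # updated
    3: {"name": "Carol", "city": "Madrid"},   # newly inserted
}

inserted_rows, updated_rows = diff_snapshots(previous_snapshot, current_snapshot)
```

Only the rows in `inserted_rows` and `updated_rows` need to pass through the later filters and transformations. This sketch does not detect deletions, which the text above does not cover either.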
ETL is a complex combination of process and technology that consumes a significant portion of the data warehouse development effort and depends on the skills of the business analysts, database designers, and application developers. It is not a one-time process, since new data is added to the data warehouse periodically: monthly, daily, hourly, and sometimes on an ad hoc basis. The ETL process converts the relevant data from the source systems into useful information to be stored in a data warehouse.

The extraction process determines the correct subset of source data to be used for further processing. It takes place at idle times of the business, preferably at night.

- Data is extracted from heterogeneous sources.
- Every data source has its own set of characteristics that need to be managed and consolidated into the ETL system in order to extract data.
- Extraction is mostly performed by COBOL routines, but this is not recommended because of the high program maintenance involved.

The content of the logical data mapping document is a critical component of the ETL process. The logical data mapping shows the source and target columns. The transformation column contains the conversion rule that needs to be applied to the source column to obtain the target column. Typically it is in the form of SQL.

Types of sources

Cooperative sources:

- Replicated sources: the published mechanism.
- Call back sources: call external code when changes happen.
- Internal action sources: perform only internal actions when changes occur.

Non-cooperative sources:

- Snapshot sources: offer only a full copy of the source.
- Queryable sources: provide a query interface.
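The logical data mapping described above, with its source column, target column, and SQL transformation rule, can be sketched as a small table that drives query generation. Everything named here is an assumption made for illustration: the mapping rows, the `src_sales` table, and the helper `build_extract_query` are hypothetical, and SQLite stands in for the source system.

```python
import sqlite3

# Each row of the hypothetical mapping document:
# (target column, source column, transformation rule written as SQL)
LOGICAL_DATA_MAPPING = [
    ("customer_name", "cust_nm", "UPPER(TRIM(cust_nm))"),
    ("sale_amount", "amt", "CAST(amt AS REAL)"),
]


def build_extract_query(mapping, source_table):
    """Turn the mapping rows into one SELECT that applies every rule."""
    select_list = ", ".join(
        f"{rule} AS {target}" for target, _source, rule in mapping
    )
    return f"SELECT {select_list} FROM {source_table}"


def run_demo():
    """Apply the mapping to a one-row, in-memory source table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE src_sales (cust_nm TEXT, amt TEXT)")
    conn.execute("INSERT INTO src_sales VALUES ('  alice ', '12.50')")
    query = build_extract_query(LOGICAL_DATA_MAPPING, "src_sales")
    return conn.execute(query).fetchall()
```

Keeping the rules as data rather than hard-coding them in the query means the mapping document and the extraction logic stay in step. That is a design choice of this sketch, not something the text above prescribes.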
